14 April 2026

Characterising the Views on Safety from Frontier AI Labs: Anthropic and DeepMind

Day 13 of Inkhaven: 30 Days of Posts

Written quickly as part of the Inkhaven Residency. Epistemic status: substantially based on vibes and my impressions from a high degree of exposure to the ecosystem. I won't be providing links to evidence for the claims.

I find it interesting to consider the characters of the leaders of the different AI labs, and the public communications of the companies, to get a sense of what the different labs actually care about. This kind of characterisation feels important because it clearly trickles down to their approaches to safety and how the organisations actually behave.

Anthropic

Anthropic have a unique character to their communications. For the most part, it feels like Dario Amodei doesn't put himself in the foreground or impose his views on exactly how safety should be handled. He obviously thinks that it is really important, but the precise details of what it means to do safety well seem to be deferred to other people within the organisation, e.g. Holden Karnofsky, or others responsible for drafting the Responsible Scaling Policy (RSP).

Of course, he is responsible for whether or not Mythos gets deployed, and his choice around that was clearly "we should be careful about releasing this, and it's important that we set a precedent above and beyond the RSP about what we think is responsible." I don't think any company could have reasonably released Mythos, but the handling of it clearly communicates a seriousness about safety and earnestness about doing the right thing.

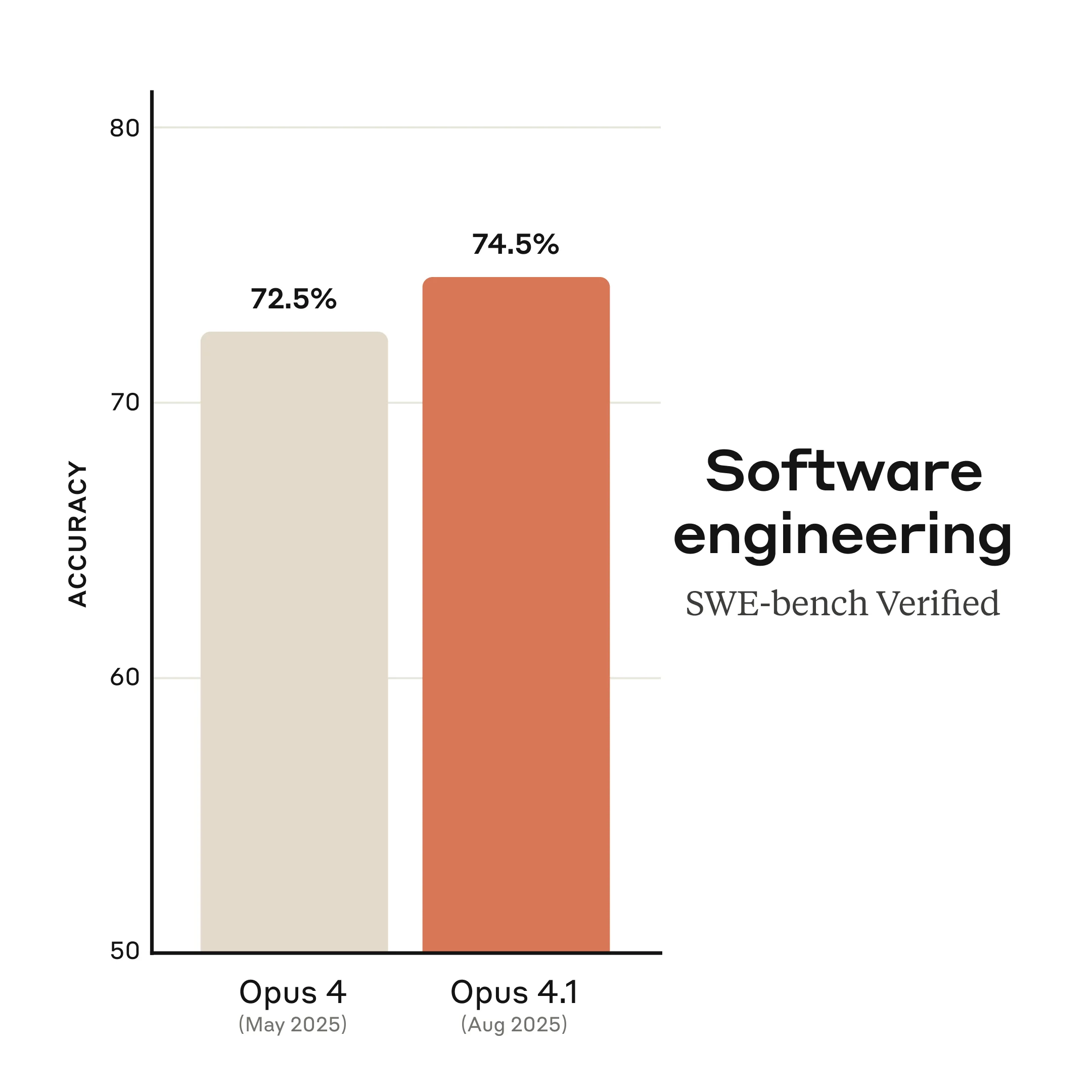

In general, Anthropic have a vibe of trying very hard to do the right thing, and a nerdy attitude of just executing really well and communicating in a way that tends to downplay how good they are, rather than focusing on hype. As an example, look at their marketing for Opus 4.1.

Getting people excited by a 2% increase on a benchmark is definitely a wink and a nod to insiders, in a way that shows how Anthropic think of themselves as the technical person's AI provider.

Google DeepMind

DeepMind are the most mysterious of the AI labs. They seem to communicate less than the other labs in general, and communications feel like somewhat of an afterthought rather than being baked into the company's DNA the way they are at the other labs. This is probably a result of Google DeepMind (GDM) having been highly secretive for most of its existence, with people interviewing at the company in the early 2010s not even knowing exactly what it was doing.

The release of The Thinking Game (a great documentary imo) represents a huge change in the information environment around the company, and reveals a lot about how people within it think about AI, particularly its co-founder and CEO Demis Hassabis. GDM is interesting in that it has been working towards AGI since its founding in 2010, five years before OpenAI was founded.

GDM was founded without the inherent paranoia about the technology that can be found at OpenAI and Anthropic. Demis seems to straightforwardly think that developing the technology is a really good idea: there are risks, but they just need to be managed, and the technology will unlock an extraordinary age of human potential. Anthropic, by comparison, was founded as an AI safety company that does capabilities work. Demis takes more of a scientist's perspective, while Dario talks about the risks with more emotional weight, and you can see him wrestling with them in his essay Machines of Loving Grace. There's a sense of reluctance at Anthropic, a feeling that their hand has been forced because they don't want someone else to be the first to build AGI.

Interestingly, Anthropic and Google DeepMind seem to have the closest relationship of any two frontier labs, with Alphabet owning 10% of Anthropic's stock and providing an enormous amount of compute to Anthropic via its TPUs for both training and inference. Overall, the two labs are highly aligned in their underlying philosophies and approaches.

In Part 2, I look at OpenAI.
